Artificial Intelligence

Artificial intelligence (AI) is a beautiful subject once you dive into its complexity. This branch of computer science, dedicated to creating intelligent machines capable of performing tasks that typically require human intelligence, has evolved from a theoretical concept into a pervasive reality. AI encompasses a vast array of technologies, including machine learning, deep learning, natural language processing, computer vision, robotics, and more, each contributing to its remarkable progress.

Recurrent Neural Network

Echo State Networks: A Reservoir of Power for Sequential Data

May 3, 2024

Problems such as long-term dependencies and vanishing gradients are traditionally tackled with LSTMs and GRUs. These architectures reduce the vanishing gradient problem only to some extent, by explicitly modelling long-term dependencies, and they still require careful initialization and training procedures to ensure stable learning. There is another approach: the Echo State Network (ESN). Its 'reservoir' is the recurrent layer of randomly connected neurons. It serves as a dynamic memory that captures and processes temporal information from the input data. The reservoir neurons are typically densely interconnected with random weights, which remain unchanged during training.
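The reservoir idea can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the post's implementation: the function names, the reservoir size, the spectral-radius rescaling, the ridge-regression readout, and the toy sine task are all assumptions chosen for the example. The point it shows is that the recurrent weights are drawn once at random and never updated, while only a linear readout is fitted.

```python
import numpy as np

def make_reservoir(n_inputs, n_reservoir, spectral_radius=0.9, seed=0):
    """Draw fixed random input and recurrent weights (never trained)."""
    rng = np.random.default_rng(seed)
    W_in = rng.uniform(-0.5, 0.5, size=(n_reservoir, n_inputs))
    W = rng.uniform(-0.5, 0.5, size=(n_reservoir, n_reservoir))
    # Assumption: rescale so the largest absolute eigenvalue equals
    # spectral_radius, a common heuristic for keeping the dynamics stable.
    W *= spectral_radius / np.max(np.abs(np.linalg.eigvals(W)))
    return W_in, W

def run_reservoir(W_in, W, inputs):
    """Drive the reservoir with the input sequence and collect its states."""
    x = np.zeros(W.shape[0])
    states = np.zeros((len(inputs), W.shape[0]))
    for t, u in enumerate(inputs):
        x = np.tanh(W_in @ u + W @ x)
        states[t] = x
    return states

def train_readout(states, targets, ridge=1e-6):
    """Fit the linear readout with closed-form ridge regression."""
    A = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(A, states.T @ targets)

# Toy task (hypothetical): predict the next sample of a sine wave.
signal = np.sin(np.linspace(0, 20 * np.pi, 2000))
inputs, targets = signal[:-1, None], signal[1:, None]

W_in, W = make_reservoir(n_inputs=1, n_reservoir=100)
states = run_reservoir(W_in, W, inputs)

washout = 100  # discard early states that still depend on the zero start
W_out = train_readout(states[washout:], targets[washout:])
pred = states[washout:] @ W_out
mse = float(np.mean((pred - targets[washout:]) ** 2))
print("training MSE:", mse)
```

Because only `W_out` is learned, training reduces to a single linear solve, with no backpropagation through the recurrent layer.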

Cruciality of Recurrence Connection and BPTT in Recurrent Neural Network

Feb 24, 2024

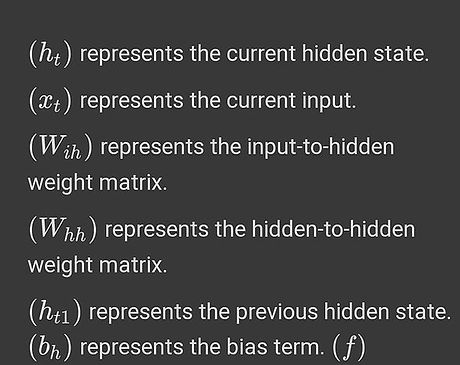

We are already familiar with RNNs. Their ability to maintain memory over time led to their use in language models for years. RNNs can be trained by unfolding them over time and applying the standard backpropagation algorithm to compute gradients and update ....

Long Short-Term Memory (LSTM) in Recurrent Neural Networks: Learning Long-Term Dependencies for Language Translation

May 5, 2025

The vanishing gradient problem is a challenge encountered when training deep neural networks, including recurrent neural networks (RNNs). It occurs when gradients become extremely small during backpropagation, making it difficult for the model to learn long-range dependencies. In the context of RNNs, this problem is especially pronounced when dealing ....
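The effect described above can be seen numerically in a small sketch. It assumes a simplified tanh RNN with no input term, small random recurrent weights, and hypothetical names and sizes; none of these come from the post. The gradient of the latest hidden state with respect to the initial one is a product of per-step Jacobians, and its norm shrinks as more time steps are unrolled.

```python
import numpy as np

def gradient_norms(n_hidden=20, n_steps=50, weight_scale=0.5, seed=0):
    """Track the norm of d h_t / d h_0 for a tanh RNN without inputs."""
    rng = np.random.default_rng(seed)
    # Assumption: small Gaussian recurrent weights (illustrative scale).
    W = rng.normal(0.0, weight_scale / np.sqrt(n_hidden),
                   size=(n_hidden, n_hidden))
    h = rng.normal(size=n_hidden)
    J = np.eye(n_hidden)  # accumulated Jacobian d h_t / d h_0
    norms = []
    for _ in range(n_steps):
        h = np.tanh(W @ h)
        # One-step Jacobian of tanh(W h) is diag(1 - h_new^2) @ W.
        J = np.diag(1.0 - h ** 2) @ W @ J
        norms.append(np.linalg.norm(J, 2))
    return norms

norms = gradient_norms()
print("after 1 step:  ", norms[0])
print("after 50 steps:", norms[-1])
```

With larger recurrent weights the same product can grow instead of shrink, which is the exploding-gradient counterpart.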
